Testing Django applications¶

Automated testing is an extremely useful bug-killing tool for the modern Web developer. You can use a collection of tests – a test suite – to solve, or avoid, a number of problems:

- When you’re writing new code, you can use tests to validate your code works as expected.

- When you’re refactoring or modifying old code, you can use tests to ensure your changes haven’t affected your application’s behavior unexpectedly.

Testing a Web application is a complex task, because a Web application is made of several layers of logic – from HTTP-level request handling, to form validation and processing, to template rendering. With Django’s test-execution framework and assorted utilities, you can simulate requests, insert test data, inspect your application’s output and generally verify your code is doing what it should be doing.

The best part is, it’s really easy.

This document is split into two primary sections. First, we explain how to write tests with Django. Then, we explain how to run them.

Writing tests¶

There are two primary ways to write tests with Django, corresponding to the two test frameworks that ship in the Python standard library. The two frameworks are:

- Unit tests – tests that are expressed as methods on a Python class that subclasses unittest.TestCase or Django’s customized TestCase. For example:

      import unittest

      class MyFuncTestCase(unittest.TestCase):
          def testBasic(self):
              a = ['larry', 'curly', 'moe']
              self.assertEqual(my_func(a, 0), 'larry')
              self.assertEqual(my_func(a, 1), 'curly')

- Doctests – tests that are embedded in your functions’ docstrings and are written in a way that emulates a session of the Python interactive interpreter. For example:

      def my_func(a_list, idx):
          """
          >>> a = ['larry', 'curly', 'moe']
          >>> my_func(a, 0)
          'larry'
          >>> my_func(a, 1)
          'curly'
          """
          return a_list[idx]

We’ll discuss choosing the appropriate test framework later; however, most experienced developers prefer unit tests. You can also use any other Python test framework, as we’ll explain in a bit.
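To make the comparison concrete, here is a minimal sketch that checks the same my_func with both frameworks, using only the standard library and no Django. The helper names run_unit_tests and run_doctests are illustrative assumptions, not part of Django or of either framework.

```python
import doctest
import unittest


def my_func(a_list, idx):
    """Return the item at position idx.

    >>> a = ['larry', 'curly', 'moe']
    >>> my_func(a, 0)
    'larry'
    >>> my_func(a, 1)
    'curly'
    """
    return a_list[idx]


class MyFuncTestCase(unittest.TestCase):
    def test_basic(self):
        a = ['larry', 'curly', 'moe']
        self.assertEqual(my_func(a, 0), 'larry')
        self.assertEqual(my_func(a, 1), 'curly')


def run_unit_tests():
    """Run MyFuncTestCase and return the unittest result object."""
    suite = unittest.TestLoader().loadTestsFromTestCase(MyFuncTestCase)
    return unittest.TextTestRunner(verbosity=0).run(suite)


def run_doctests():
    """Run the examples in my_func's docstring and return the summary."""
    runner = doctest.DocTestRunner(verbose=False)
    finder = doctest.DocTestFinder()
    for test in finder.find(my_func, name='my_func',
                            globs={'my_func': my_func}):
        runner.run(test)
    return runner.summarize(verbose=False)
```

Both helpers exercise the same behavior. The unit test states its expectations as assertions, while the doctest states them as an interpreter transcript.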

Writing unit tests¶

Django’s unit tests use a Python standard library module: unittest. This module defines tests using a class-based approach.

unittest2

Python 2.7 introduced some major changes to the unittest library, adding some extremely useful features. To ensure that every Django project can benefit from these new features, Django ships with a copy of unittest2, a copy of the Python 2.7 unittest library, backported for Python 2.5 compatibility.

To access this library, Django provides the django.utils.unittest module alias. If you are using Python 2.7, or you have installed unittest2 locally, Django will map the alias to the installed version of the unittest library. Otherwise, Django will use its own bundled version of unittest2.

To use this alias, simply use:

from django.utils import unittest

wherever you would have historically used:

import unittest

If you want to continue to use the base unittest library, you can – you just won’t get any of the nice new unittest2 features.

For a given Django application, the test runner looks for unit tests in two places:

- The models.py file. The test runner looks for any subclass of unittest.TestCase in this module.

- A file called tests.py in the application directory – i.e., the directory that holds models.py. Again, the test runner looks for any subclass of unittest.TestCase in this module.

Here is an example unittest.TestCase subclass:

    from django.utils import unittest
    from myapp.models import Animal

    class AnimalTestCase(unittest.TestCase):
        def setUp(self):
            self.lion = Animal.objects.create(name="lion", sound="roar")
            self.cat = Animal.objects.create(name="cat", sound="meow")

        def test_animals_can_speak(self):
            """Animals that can speak are correctly identified"""
            self.assertEqual(self.lion.speak(), 'The lion says "roar"')
            self.assertEqual(self.cat.speak(), 'The cat says "meow"')

When you run your tests, the default behavior of the test utility is to find all the test cases (that is, subclasses of unittest.TestCase) in models.py and tests.py, automatically build a test suite out of those test cases, and run that suite.

There is a second way to define the test suite for a module: if you define a function called suite() in either models.py or tests.py, the Django test runner will use that function to construct the test suite for that module. This follows the suggested organization for unit tests. See the Python documentation for more details on how to construct a complex test suite.
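As an illustration of the suite() hook, the following sketch builds a suite by hand with the standard unittest API. The test case classes are made-up placeholders that avoid any database access. In a Django application the function would live in models.py or tests.py.

```python
import unittest


class AdditionTestCase(unittest.TestCase):
    def test_small_numbers(self):
        self.assertEqual(1 + 1, 2)

    def test_negative_numbers(self):
        self.assertEqual(-1 + -1, -2)


class StringTestCase(unittest.TestCase):
    def test_upper(self):
        self.assertEqual('roar'.upper(), 'ROAR')

    def test_lower(self):
        self.assertEqual('MEOW'.lower(), 'meow')


def suite():
    """Build the suite by hand instead of relying on automatic discovery."""
    loader = unittest.TestLoader()
    test_suite = unittest.TestSuite()
    # Add every test method of one test case...
    test_suite.addTests(loader.loadTestsFromTestCase(AdditionTestCase))
    # ...but only a single, explicitly named method of another.
    test_suite.addTest(StringTestCase('test_upper'))
    return test_suite
```

Defining suite() is mainly useful when you want to run a subset of tests or control how they are grouped. Otherwise the automatic discovery described above is usually enough.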

For more details about unittest, see the Python documentation.

Writing doctests¶

Doctests use Python’s standard doctest module, which searches your docstrings for statements that resemble a session of the Python interactive interpreter. A full explanation of how doctest works is out of the scope of this document; read Python’s official documentation for the details.

What’s a docstring?

A good explanation of docstrings (and some guidelines for using them effectively) can be found in PEP 257:

A docstring is a string literal that occurs as the first statement in a module, function, class, or method definition. Such a docstring becomes the __doc__ special attribute of that object.

For example, this function has a docstring that describes what it does:

    def add_two(num):
        "Return the result of adding two to the provided number."
        return num + 2

Because tests often make great documentation, putting tests directly in your docstrings is an effective way to document and test your code.

As with unit tests, for a given Django application, the test runner looks for doctests in two places:

- The models.py file. You can define module-level doctests and/or a doctest for individual models. It’s common practice to put application-level doctests in the module docstring and model-level doctests in the model docstrings.

- A file called tests.py in the application directory – i.e., the directory that holds models.py. This file is a hook for any and all doctests you want to write that aren’t necessarily related to models.

This example doctest is equivalent to the example given in the unittest section above:

    # models.py

    from django.db import models

    class Animal(models.Model):
        """
        An animal that knows how to make noise

        # Create some animals
        >>> lion = Animal.objects.create(name="lion", sound="roar")
        >>> cat = Animal.objects.create(name="cat", sound="meow")

        # Make 'em speak
        >>> lion.speak()
        'The lion says "roar"'
        >>> cat.speak()
        'The cat says "meow"'
        """
        name = models.CharField(max_length=20)
        sound = models.CharField(max_length=20)

        def speak(self):
            return 'The %s says "%s"' % (self.name, self.sound)

When you run your tests, the test runner will find this docstring, notice that portions of it look like an interactive Python session, and execute those lines while checking that the results match.

In the case of model tests, note that the test runner takes care of creating its own test database. That is, any test that accesses a database – by creating and saving model instances, for example – will not affect your production database. However, the database is not refreshed between doctests, so if your doctest requires a certain state you should consider flushing the database or loading a fixture. (See the section on fixtures, below, for more on this.) Note that to use this feature, the database user Django is connecting as must have CREATE DATABASE rights.

For more details about doctest, see the Python documentation.

Which should I use?¶

Because Django supports both of the standard Python test frameworks, it’s up to you and your tastes to decide which one to use. You can even decide to use both.

For developers new to testing, however, this choice can seem confusing. Here, then, are a few key differences to help you decide which approach is right for you:

- If you’ve been using Python for a while, doctest will probably feel more “pythonic”. It’s designed to make writing tests as easy as possible, so it requires no overhead of writing classes or methods. You simply put tests in docstrings. This has the added advantage of serving as documentation (and correct documentation, at that!). However, while doctests are good for some simple example code, they are not very good if you want to produce either high quality, comprehensive tests or high quality documentation. Test failures are often difficult to debug as it can be unclear exactly why the test failed. Thus, doctests should generally be avoided and used primarily for documentation examples only.

- The unittest framework will probably feel very familiar to developers coming from Java. unittest is inspired by Java’s JUnit, so you’ll feel at home with this method if you’ve used JUnit or any test framework inspired by JUnit.

- If you need to write a bunch of tests that share similar code, then you’ll appreciate the unittest framework’s organization around classes and methods. This makes it easy to abstract common tasks into common methods. The framework also supports explicit setup and/or cleanup routines, which give you a high level of control over the environment in which your test cases are run.

- If you’re writing tests for Django itself, you should use unittest.

Running tests¶

Once you’ve written tests, run them using the test command of your project’s manage.py utility:

$ ./manage.py test

By default, this will run every test in every application in INSTALLED_APPS. If you only want to run tests for a particular application, add the application name to the command line. For example, if your INSTALLED_APPS contains 'myproject.polls' and 'myproject.animals', you can run the myproject.animals unit tests alone with this command:

$ ./manage.py test animals

Note that we used animals, not myproject.animals.

You can be even more specific by naming an individual test case. To run a single test case in an application (for example, the AnimalTestCase described in the “Writing unit tests” section), add the name of the test case to the label on the command line:

$ ./manage.py test animals.AnimalTestCase

And it gets even more granular than that! To run a single test method inside a test case, add the name of the test method to the label:

$ ./manage.py test animals.AnimalTestCase.test_animals_can_speak

You can use the same rules if you’re using doctests. Django will use the test label as a path to the test method or class that you want to run. If your models.py or tests.py has a function with a doctest, or a class with a class-level doctest, you can invoke that test by appending the name of the function or class to the label:

$ ./manage.py test animals.classify

If you want to run the doctest for a specific method in a class, add the name of the method to the label:

$ ./manage.py test animals.Classifier.run

If you’re using a __test__ dictionary to specify doctests for a module, Django will use the label as a key in the __test__ dictionary defined in models.py and tests.py.
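For readers unfamiliar with the __test__ dictionary, the sketch below shows the underlying doctest feature using only the standard library. The module name animals_tests, the keys, and the helper run_module_doctests are assumptions made for illustration. How Django maps a command-line label onto a key is not shown here.

```python
import doctest
import types

# A stand-in for an application's tests.py module (hypothetical name).
tests_module = types.ModuleType('animals_tests')

# Keys act as labels; values are strings (or objects) containing doctests.
tests_module.__test__ = {
    'classify': """
        >>> sorted(['lion', 'cat'])
        ['cat', 'lion']
    """,
    'speak': """
        >>> 'The %s says "%s"' % ('lion', 'roar')
        'The lion says "roar"'
    """,
}


def run_module_doctests(module):
    """Run every doctest reachable from the module, including __test__."""
    return doctest.testmod(module, verbose=False)
```

Calling run_module_doctests(tests_module) runs both entries and returns a summary of failed and attempted examples.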

If you press Ctrl-C while the tests are running, the test runner will wait for the currently running test to complete and then exit gracefully. During a graceful exit the test runner will output details of any test failures, report on how many tests were run and how many errors and failures were encountered, and destroy any test databases as usual. Thus pressing Ctrl-C can be very useful if you forget to pass the --failfast option, notice that some tests are unexpectedly failing, and want to get details on the failures without waiting for the full test run to complete.

If you do not want to wait for the currently running test to finish, you can press Ctrl-C a second time and the test run will halt immediately, but not gracefully. No details of the tests run before the interruption will be reported, and any test databases created by the run will not be destroyed.

Test with warnings enabled

It’s a good idea to run your tests with Python warnings enabled: python -Wall manage.py test. The -Wall flag tells Python to display deprecation warnings. Django, like many other Python libraries, uses these warnings to flag when features are going away. It also might flag areas in your code that aren’t strictly wrong but could benefit from a better implementation.

Running tests outside the test runner¶

If you want to run tests outside of ./manage.py test – for example, from a shell prompt – you will need to set up the test environment first. Django provides a convenience method to do this:

>>> from django.test.utils import setup_test_environment

>>> setup_test_environment()

This convenience method puts several Django features into modes that allow for repeatable testing. It does not create the test database; that step is handled separately by the test runner.

The call to setup_test_environment() is made automatically as part of the setup of ./manage.py test. You only need to manually invoke this method if you’re not running your tests via Django’s test runner.

The test database¶

Tests that require a database (namely, model tests) will not use your “real” (production) database. Separate, blank databases are created for the tests.

Regardless of whether the tests pass or fail, the test databases are destroyed when all the tests have been executed.

By default the test databases get their names by prepending test_ to the value of the NAME settings for the databases defined in DATABASES. When using the SQLite database engine the tests will by default use an in-memory database (i.e., the database will be created in memory, bypassing the filesystem entirely!). If you want to use a different database name, specify TEST_NAME in the dictionary for any given database in DATABASES.

Aside from using a separate database, the test runner will otherwise use all of the same database settings you have in your settings file: ENGINE, USER, HOST, etc. The test database is created by the user specified by USER, so you’ll need to make sure that the given user account has sufficient privileges to create a new database on the system.

For fine-grained control over the character encoding of your test database, use the TEST_CHARSET option. If you’re using MySQL, you can also use the TEST_COLLATION option to control the particular collation used by the test database. See the settings documentation for details of these advanced settings.
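The naming rule and the per-database test options can be sketched as follows. The aliases, database names, user, and charset values are made up for illustration. The helper expected_test_name is a hypothetical function that mirrors the rule described above; it is not Django’s own code, and it ignores the SQLite in-memory default.

```python
# Hypothetical settings fragment; every name and value here is illustrative.
DATABASES = {
    'default': {
        'ENGINE': 'django.db.backends.mysql',
        'NAME': 'myproject',
        'USER': 'myproject_user',
        # Override the default test database name ('test_myproject').
        'TEST_NAME': 'myproject_test_run',
        'TEST_CHARSET': 'utf8',
        'TEST_COLLATION': 'utf8_general_ci',
    },
    'archive': {
        'ENGINE': 'django.db.backends.mysql',
        'NAME': 'archive',
        'USER': 'myproject_user',
    },
}


def expected_test_name(db_settings):
    """Mirror the documented naming rule: TEST_NAME wins, else 'test_' + NAME."""
    return db_settings.get('TEST_NAME') or 'test_' + db_settings['NAME']
```

Under these assumptions, the default alias would be tested against myproject_test_run and the archive alias against test_archive.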

Testing master/slave configurations¶

If you’re testing a multiple database configuration with master/slave replication, this strategy of creating test databases poses a problem. When the test databases are created, there won’t be any replication, and as a result, data created on the master won’t be seen on the slave.

To compensate for this, Django allows you to define that a database is a test mirror. Consider the following (simplified) example database configuration:

    DATABASES = {
        'default': {
            'ENGINE': 'django.db.backends.mysql',
            'NAME': 'myproject',
            'HOST': 'dbmaster',
            # ... plus some other settings
        },
        'slave': {
            'ENGINE': 'django.db.backends.mysql',
            'NAME': 'myproject',
            'HOST': 'dbslave',
            'TEST_MIRROR': 'default'
            # ... plus some other settings
        }
    }

In this setup, we have two database servers: dbmaster, described by the database alias default, and dbslave described by the alias slave. As you might expect, dbslave has been configured by the database administrator as a read slave of dbmaster, so in normal activity, any write to default will appear on slave.

If Django created two independent test databases, this would break any tests that expected replication to occur. However, the slave database has been configured as a test mirror (using the TEST_MIRROR setting), indicating that under testing, slave should be treated as a mirror of default.

When the test environment is configured, a test version of slave will not be created. Instead the connection to slave will be redirected to point at default. As a result, writes to default will appear on slave – but because they are actually the same database, not because there is data replication between the two databases.

Controlling creation order for test databases¶

By default, Django will always create the default database first. However, no guarantees are made on the creation order of any other databases in your test setup.

If your database configuration requires a specific creation order, you can specify the dependencies that exist using the TEST_DEPENDENCIES setting. Consider the following (simplified) example database configuration:

    DATABASES = {
        'default': {
            # ... db settings
            'TEST_DEPENDENCIES': ['diamonds']
        },
        'diamonds': {
            # ... db settings
        },
        'clubs': {
            # ... db settings
            'TEST_DEPENDENCIES': ['diamonds']
        },
        'spades': {
            # ... db settings
            'TEST_DEPENDENCIES': ['diamonds','hearts']
        },
        'hearts': {
            # ... db settings
            'TEST_DEPENDENCIES': ['diamonds','clubs']
        }
    }

Under this configuration, the diamonds database will be created first, as it is the only database alias without dependencies. The default and clubs aliases will be created next (although the order of creation of this pair is not guaranteed); then hearts; and finally spades.

If there are any circular dependencies in the TEST_DEPENDENCIES definition, an ImproperlyConfigured exception will be raised.
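The ordering described above amounts to a dependency-respecting (topological) sort. The following is a simplified sketch of that idea, not Django’s implementation. The function name creation_order is an assumption, and ValueError stands in for Django’s ImproperlyConfigured so that the example runs without Django installed.

```python
def creation_order(databases):
    """Return aliases in an order that satisfies TEST_DEPENDENCIES.

    Simplified sketch: aliases are created in rounds; each round creates
    every alias whose dependencies were created in earlier rounds.
    """
    dependencies = {
        alias: set(conf.get('TEST_DEPENDENCIES', []))
        for alias, conf in databases.items()
    }
    ordered = []
    # Prefer 'default' within a round; sort the rest for a stable result.
    pending = sorted(dependencies, key=lambda alias: (alias != 'default', alias))
    while pending:
        created = set(ordered)
        ready = [alias for alias in pending if dependencies[alias] <= created]
        if not ready:
            # Nothing can be created: the remaining aliases block each other.
            raise ValueError(
                'Circular dependency in TEST_DEPENDENCIES: %s' % pending)
        ordered.extend(ready)
        pending = [alias for alias in pending if alias not in ready]
    return ordered


example = {
    'default': {'TEST_DEPENDENCIES': ['diamonds']},
    'diamonds': {},
    'clubs': {'TEST_DEPENDENCIES': ['diamonds']},
    'spades': {'TEST_DEPENDENCIES': ['diamonds', 'hearts']},
    'hearts': {'TEST_DEPENDENCIES': ['diamonds', 'clubs']},
}
order = creation_order(example)
```

For the example configuration this yields diamonds, then default and clubs, then hearts, then spades. The relative order of default and clubs is an artifact of this sketch, since the documentation makes no guarantee for that pair.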

Other test conditions¶

Regardless of the value of the DEBUG setting in your configuration file, all Django tests run with DEBUG=False. This is to ensure that the observed output of your code matches what will be seen in a production setting.

Caches are not cleared after each test. Unlike databases, no separate “test cache” is used, so running “manage.py test fooapp” in production can insert data from the tests into the cache of a live system. This behavior may change in the future.

Understanding the test output¶

When you run your tests, you’ll see a number of messages as the test runner prepares itself. You can control the level of detail of these messages with the verbosity option on the command line:

Creating test database...

Creating table myapp_animal

Creating table myapp_mineral

Loading 'initial_data' fixtures...

No fixtures found.

This tells you that the test runner is creating a test database, as described in the previous section.

Once the test database has been created, Django will run your tests. If everything goes well, you’ll see something like this:

----------------------------------------------------------------------

Ran 22 tests in 0.221s

OK

If there are test failures, however, you’ll see full details about which tests failed:

======================================================================

FAIL: Doctest: ellington.core.throttle.models

----------------------------------------------------------------------

Traceback (most recent call last):

File "/dev/django/test/doctest.py", line 2153, in runTest

raise self.failureException(self.format_failure(new.getvalue()))

AssertionError: Failed doctest test for myapp.models

File "/dev/myapp/models.py", line 0, in models

----------------------------------------------------------------------

File "/dev/myapp/models.py", line 14, in myapp.models

Failed example:

throttle.check("actor A", "action one", limit=2, hours=1)

Expected:

True

Got:

False

----------------------------------------------------------------------

Ran 2 tests in 0.048s

FAILED (failures=1)

A full explanation of this error output is beyond the scope of this document, but it’s pretty intuitive. You can consult the documentation of Python’s unittest library for details.

Note that the return code for the test-runner script is 1 for any number of failed and erroneous tests. If all the tests pass, the return code is 0. This feature is useful if you’re using the test-runner script in a shell script and need to test for success or failure at that level.

Speeding up the tests¶

In recent versions of Django, the default password hasher is rather slow by design. If during your tests you are authenticating many users, you may want to use a custom settings file and set the PASSWORD_HASHERS setting to a faster hashing algorithm:

    PASSWORD_HASHERS = (
        'django.contrib.auth.hashers.MD5PasswordHasher',
    )

Don’t forget to also include in PASSWORD_HASHERS any hashing algorithm used in fixtures, if any.

Integration with coverage.py¶

Code coverage describes how much source code has been tested. It shows which parts of your code are being exercised by tests and which are not. It’s an important part of testing applications, so it’s strongly recommended to check the coverage of your tests.

Django can be easily integrated with coverage.py, a tool for measuring code coverage of Python programs. First, install coverage.py. Next, run the following from your project folder containing manage.py:

coverage run --source='.' manage.py test myapp

This runs your tests and collects coverage data of the executed files in your project. You can see a report of this data by typing the following command:

coverage report

Note that some Django code was executed while running tests, but it is not listed here because of the source flag passed to the previous command.

For more options like annotated HTML listings detailing missed lines, see the coverage.py docs.

Testing tools¶

Django provides a small set of tools that come in handy when writing tests.

The test client¶

The test client is a Python class that acts as a dummy Web browser, allowing you to test your views and interact with your Django-powered application programmatically.

Some of the things you can do with the test client are:

- Simulate GET and POST requests on a URL and observe the response – everything from low-level HTTP (result headers and status codes) to page content.

- Test that the correct view is executed for a given URL.

- Test that a given request is rendered by a given Django template, with a template context that contains certain values.

Note that the test client is not intended to be a replacement for Selenium or other “in-browser” frameworks. Django’s test client has a different focus. In short:

- Use Django’s test client to establish that the correct view is being called and that the view is collecting the correct context data.

- Use in-browser frameworks like Selenium to test rendered HTML and the behavior of Web pages, namely JavaScript functionality. Django also provides special support for those frameworks; see the section on LiveServerTestCase for more details.

A comprehensive test suite should use a combination of both test types.

Overview and a quick example¶

To use the test client, instantiate django.test.client.Client and retrieve Web pages:

>>> from django.test.client import Client

>>> c = Client()

>>> response = c.post('/login/', {'username': 'john', 'password': 'smith'})

>>> response.status_code

200

>>> response = c.get('/customer/details/')

>>> response.content

'<!DOCTYPE html...'

As this example suggests, you can instantiate Client from within a session of the Python interactive interpreter.

Note a few important things about how the test client works:

- The test client does not require the Web server to be running. In fact, it will run just fine with no Web server running at all! That’s because it avoids the overhead of HTTP and deals directly with the Django framework. This helps make the unit tests run quickly.

- When retrieving pages, remember to specify the path of the URL, not the whole domain. For example, this is correct:

      >>> c.get('/login/')

  This is incorrect:

      >>> c.get('http://www.example.com/login/')

  The test client is not capable of retrieving Web pages that are not powered by your Django project. If you need to retrieve other Web pages, use a Python standard library module such as urllib or urllib2.

- To resolve URLs, the test client uses whatever URLconf is pointed-to by your ROOT_URLCONF setting.

- Although the above example would work in the Python interactive interpreter, some of the test client’s functionality, notably the template-related functionality, is only available while tests are running.

  The reason for this is that Django’s test runner performs a bit of black magic in order to determine which template was loaded by a given view. This black magic (essentially a patching of Django’s template system in memory) only happens during test running.

- By default, the test client will disable any CSRF checks performed by your site.

  New in Django 1.2.2: Please see the release notes

  If, for some reason, you want the test client to perform CSRF checks, you can create an instance of the test client that enforces CSRF checks. To do this, pass in the enforce_csrf_checks argument when you construct your client:

      >>> from django.test import Client
      >>> csrf_client = Client(enforce_csrf_checks=True)

Making requests¶

Use the django.test.client.Client class to make requests. It requires no arguments at time of construction:

class Client¶

Once you have a Client instance, you can call any of the following methods:

get(path, data={}, follow=False, **extra)¶

Makes a GET request on the provided path and returns a Response object, which is documented below.

The key-value pairs in the data dictionary are used to create a GET data payload. For example:

    >>> c = Client()
    >>> c.get('/customers/details/', {'name': 'fred', 'age': 7})

...will result in the evaluation of a GET request equivalent to:

    /customers/details/?name=fred&age=7

The extra keyword arguments parameter can be used to specify headers to be sent in the request. For example:

    >>> c = Client()
    >>> c.get('/customers/details/', {'name': 'fred', 'age': 7},
    ...       HTTP_X_REQUESTED_WITH='XMLHttpRequest')

...will send the HTTP header HTTP_X_REQUESTED_WITH to the details view, which is a good way to test code paths that use the django.http.HttpRequest.is_ajax() method.

CGI specification

The headers sent via **extra should follow the CGI specification. For example, emulating a different “Host” header as sent in the HTTP request from the browser to the server should be passed as HTTP_HOST.

If you already have the GET arguments in URL-encoded form, you can use that encoding instead of using the data argument. For example, the previous GET request could also be posed as:

    >>> c = Client()
    >>> c.get('/customers/details/?name=fred&age=7')

If you provide a URL with both encoded GET data and a data argument, the data argument will take precedence.

If you set follow to True the client will follow any redirects and a redirect_chain attribute will be set in the response object containing tuples of the intermediate urls and status codes.

If you had a URL /redirect_me/ that redirected to /next/, that redirected to /final/, this is what you’d see:

    >>> response = c.get('/redirect_me/', follow=True)
    >>> response.redirect_chain
    [(u'http://testserver/next/', 302), (u'http://testserver/final/', 302)]
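The two conventions described for get(), encoding data into the query string and naming headers in CGI style, can be illustrated with a small standard-library sketch. It is written for Python 3 (urllib.parse) and does not use the test client itself. The helper names build_get_url and cgi_header_name are assumptions introduced for this example. Note that under the CGI convention the Content-Type and Content-Length headers are exceptions that take no HTTP_ prefix; the helper below does not handle them.

```python
from urllib.parse import urlencode


def build_get_url(path, data):
    """Append URL-encoded key-value pairs to a path, as a GET request would."""
    return '%s?%s' % (path, urlencode(data))


def cgi_header_name(http_header):
    """Convert an HTTP header name to its CGI-style key (HTTP_ prefix form)."""
    return 'HTTP_' + http_header.upper().replace('-', '_')


url = build_get_url('/customers/details/', {'name': 'fred', 'age': 7})
ajax_key = cgi_header_name('X-Requested-With')
host_key = cgi_header_name('Host')
```

Here url is the same path and query string shown in the GET example above, and the two keys are the names you would pass as keyword arguments through **extra.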

post(path, data={}, content_type=MULTIPART_CONTENT, follow=False, **extra)¶

Makes a POST request on the provided path and returns a Response object, which is documented below.

The key-value pairs in the data dictionary are used to submit POST data. For example:

    >>> c = Client()
    >>> c.post('/login/', {'name': 'fred', 'passwd': 'secret'})

...will result in the evaluation of a POST request to this URL:

    /login/

...with this POST data:

    name=fred&passwd=secret

If you provide content_type (e.g. text/xml for an XML payload), the contents of data will be sent as-is in the POST request, using content_type in the HTTP Content-Type header.

If you don’t provide a value for content_type, the values in data will be transmitted with a content type of multipart/form-data. In this case, the key-value pairs in data will be encoded as a multipart message and used to create the POST data payload.

To submit multiple values for a given key – for example, to specify the selections for a <select multiple> – provide the values as a list or tuple for the required key. For example, this value of data would submit three selected values for the field named choices:

    {'choices': ('a', 'b', 'd')}

Submitting files is a special case. To POST a file, you need only provide the file field name as a key, and a file handle to the file you wish to upload as a value. For example:

    >>> c = Client()
    >>> f = open('wishlist.doc')
    >>> c.post('/customers/wishes/', {'name': 'fred', 'attachment': f})
    >>> f.close()

(The name attachment here is not relevant; use whatever name your file-processing code expects.)

Note that if you wish to use the same file handle for multiple post() calls then you will need to manually reset the file pointer between posts. The easiest way to do this is to manually close the file after it has been provided to post(), as demonstrated above.

You should also ensure that the file is opened in a way that allows the data to be read. If your file contains binary data such as an image, this means you will need to open the file in rb (read binary) mode.

The extra argument acts the same as for Client.get().

If the URL you request with a POST contains encoded parameters, these parameters will be made available in the request.GET data. For example, if you were to make the request:

    >>> c.post('/login/?visitor=true', {'name': 'fred', 'passwd': 'secret'})

... the view handling this request could interrogate request.POST to retrieve the username and password, and could interrogate request.GET to determine if the user was a visitor.

If you set follow to True the client will follow any redirects and a redirect_chain attribute will be set in the response object containing tuples of the intermediate urls and status codes.
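The note about resetting the file pointer between post() calls comes down to how file handles behave once they have been read. The sketch below shows that behavior with an in-memory file from the standard library. The function read_upload is a hypothetical stand-in for whatever consumes the handle during an upload; it is not part of the test client.

```python
import io


def read_upload(file_handle):
    """Stand-in for an upload: consume the handle's remaining bytes."""
    return file_handle.read()


handle = io.BytesIO(b'wishlist contents')  # behaves like open(path, 'rb')
first = read_upload(handle)    # reads everything; pointer is now at the end
second = read_upload(handle)   # nothing left to read
handle.seek(0)                 # reset the pointer to the start
third = read_upload(handle)    # full contents are available again
```

Without the seek(0) call, or a fresh open(), a second upload of the same handle would send an empty file.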

head(path, data={}, follow=False, **extra)¶

Makes a HEAD request on the provided path and returns a Response object. Useful for testing RESTful interfaces. Acts just like Client.get() except it does not return a message body.

If you set follow to True the client will follow any redirects and a redirect_chain attribute will be set in the response object containing tuples of the intermediate urls and status codes.

options(path, data={}, follow=False, **extra)¶

Makes an OPTIONS request on the provided path and returns a Response object. Useful for testing RESTful interfaces.

If you set follow to True the client will follow any redirects and a redirect_chain attribute will be set in the response object containing tuples of the intermediate urls and status codes.

The extra argument acts the same as for Client.get().

put(path, data={}, content_type=MULTIPART_CONTENT, follow=False, **extra)¶

Makes a PUT request on the provided path and returns a Response object. Useful for testing RESTful interfaces. Acts just like Client.post() except with the PUT request method.

If you set follow to True the client will follow any redirects and a redirect_chain attribute will be set in the response object containing tuples of the intermediate urls and status codes.

delete(path, follow=False, **extra)¶

Makes a DELETE request on the provided path and returns a Response object. Useful for testing RESTful interfaces.

If you set follow to True the client will follow any redirects and a redirect_chain attribute will be set in the response object containing tuples of the intermediate urls and status codes.

The extra argument acts the same as for Client.get().

login(**credentials)¶

If your site uses Django’s authentication system and you deal with logging in users, you can use the test client’s login() method to simulate the effect of a user logging into the site.

After you call this method, the test client will have all the cookies and session data required to pass any login-based tests that may form part of a view.

The format of the credentials argument depends on which authentication backend you’re using (which is configured by your AUTHENTICATION_BACKENDS setting). If you’re using the standard authentication backend provided by Django (ModelBackend), credentials should be the user’s username and password, provided as keyword arguments:

    >>> c = Client()
    >>> c.login(username='fred', password='secret')

    # Now you can access a view that's only available to logged-in users.

If you’re using a different authentication backend, this method may require different credentials. It requires whichever credentials are required by your backend’s authenticate() method.

login() returns True if the credentials were accepted and login was successful.

Finally, you’ll need to remember to create user accounts before you can use this method. As we explained above, the test runner is executed using a test database, which contains no users by default. As a result, user accounts that are valid on your production site will not work under test conditions. You’ll need to create users as part of the test suite – either manually (using the Django model API) or with a test fixture. Remember that if you want your test user to have a password, you can’t set the user’s password by setting the password attribute directly – you must use the set_password() function to store a correctly hashed password. Alternatively, you can use the create_user() helper method to create a new user with a correctly hashed password.

-

logout()¶ If your site uses Django’s authentication system, the logout() method can be used to simulate the effect of a user logging out of your site.

After you call this method, the test client will have all the cookies and session data cleared to defaults. Subsequent requests will appear to come from an AnonymousUser.

-

Testing responses¶

The get() and post() methods both return a Response object. This

Response object is not the same as the HttpResponse object returned by

Django views; the test response object has some additional data useful for

test code to verify.

Specifically, a Response object has the following attributes:

-

class Response¶

-

client¶ The test client that was used to make the request that resulted in the response.

-

content¶ The body of the response, as a string. This is the final page content as rendered by the view, or any error message.

-

context¶ The template Context instance that was used to render the template that produced the response content.

If the rendered page used multiple templates, then context will be a list of Context objects, in the order in which they were rendered.

Regardless of the number of templates used during rendering, you can retrieve context values using the [] operator. For example, the context variable name could be retrieved using:

    >>> response = client.get('/foo/')
    >>> response.context['name']
    'Arthur'

-

request¶ The request data that stimulated the response.

-

status_code¶ The HTTP status of the response, as an integer. See RFC 2616#section-10 for a full list of HTTP status codes.

New in Django 1.3: Please see the release notes

-

templates¶ A list of Template instances used to render the final content, in the order they were rendered. For each template in the list, use template.name to get the template’s file name, if the template was loaded from a file. (The name is a string such as 'admin/index.html'.)

-

You can also use dictionary syntax on the response object to query the value

of any settings in the HTTP headers. For example, you could determine the

content type of a response using response['Content-Type'].

Exceptions¶

If you point the test client at a view that raises an exception, that exception

will be visible in the test case. You can then use a standard try ... except

block or assertRaises() to test for exceptions.

The only exceptions that are not visible to the test client are Http404,

PermissionDenied and SystemExit. Django catches these exceptions

internally and converts them into the appropriate HTTP response codes. In these

cases, you can check response.status_code in your test.

Persistent state¶

The test client is stateful. If a response returns a cookie, then that cookie

will be stored in the test client and sent with all subsequent get() and

post() requests.

Expiration policies for these cookies are not followed. If you want a cookie

to expire, either delete it manually or create a new Client instance (which

will effectively delete all cookies).

A test client has two attributes that store persistent state information. You can access these properties as part of a test condition.

-

Client.cookies¶ A Python SimpleCookie object, containing the current values of all the client cookies. See the documentation of the Cookie module for more.

-

Client.session¶ A dictionary-like object containing session information. See the session documentation for full details.

To modify the session and then save it, it must be stored in a variable first (because a new SessionStore is created every time this property is accessed):

    def test_something(self):
        session = self.client.session
        session['somekey'] = 'test'
        session.save()
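Because the client's cookie store is an ordinary SimpleCookie, it can be inspected and pruned like any other. The sketch below uses a standalone SimpleCookie as a stand-in for client.cookies; the cookie name and value are made up for illustration, and the import falls back between the Python 2 and Python 3 module names.

```python
try:
    from http.cookies import SimpleCookie  # Python 3 module name
except ImportError:
    from Cookie import SimpleCookie  # Python 2 module name

# Stand-in for client.cookies; in a real test you would read the
# attribute from the test client instead of building it by hand.
cookies = SimpleCookie()
cookies['sessionid'] = 'abc123'  # hypothetical cookie name and value
cookies['sessionid']['path'] = '/'

# Each entry is a Morsel: .value holds the cookie value, and item
# access on the Morsel exposes attributes such as the path.
session_value = cookies['sessionid'].value
session_path = cookies['sessionid']['path']

# Expiry is not enforced, so a cookie is dropped by deleting its key.
del cookies['sessionid']
remaining = list(cookies.keys())
```

Deleting a key this way corresponds to the "delete it manually" option mentioned above; creating a fresh Client discards every cookie at once.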

Example¶

The following is a simple unit test using the test client:

    from django.utils import unittest
    from django.test.client import Client

    class SimpleTest(unittest.TestCase):
        def setUp(self):
            # Every test needs a client.
            self.client = Client()

        def test_details(self):
            # Issue a GET request.
            response = self.client.get('/customer/details/')

            # Check that the response is 200 OK.
            self.assertEqual(response.status_code, 200)

            # Check that the rendered context contains 5 customers.
            self.assertEqual(len(response.context['customers']), 5)

The request factory¶

-

class RequestFactory¶

The RequestFactory shares the same API as

the test client. However, instead of behaving like a browser, the

RequestFactory provides a way to generate a request instance that can

be used as the first argument to any view. This means you can test a

view function the same way as you would test any other function – as

a black box, with exactly known inputs, testing for specific outputs.

The API for the RequestFactory is a slightly

restricted subset of the test client API:

- It only has access to the HTTP methods get(), post(), put(), delete(), head() and options().

- These methods accept all the same arguments except for follow. Since this is just a factory for producing requests, it’s up to you to handle the response.

- It does not support middleware. Session and authentication attributes must be supplied by the test itself if required for the view to function properly.

Example¶

The following is a simple unit test using the request factory:

    from django.utils import unittest
    from django.test.client import RequestFactory

    class SimpleTest(unittest.TestCase):
        def setUp(self):
            # Every test needs access to the request factory.
            self.factory = RequestFactory()

        def test_details(self):
            # Create an instance of a GET request.
            request = self.factory.get('/customer/details')

            # Test my_view() as if it were deployed at /customer/details
            response = my_view(request)
            self.assertEqual(response.status_code, 200)

TestCase¶

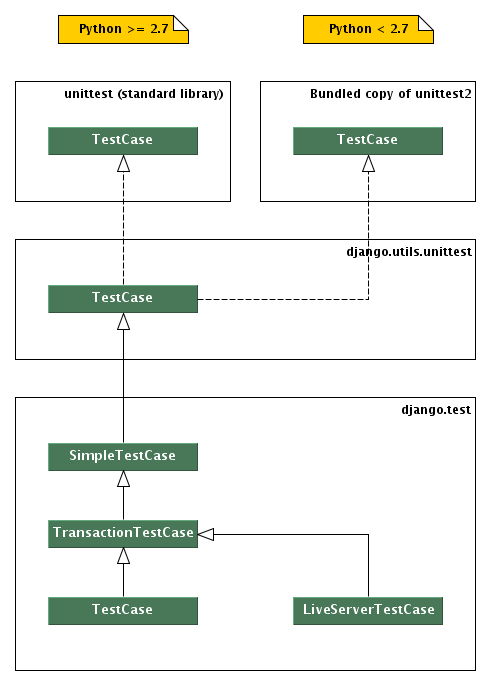

Normal Python unit test classes extend a base class of

unittest.TestCase. Django provides a few extensions of this base class:

Hierarchy of Django unit testing classes

-

class TestCase¶

This class provides some additional capabilities that can be useful for testing Web sites.

Converting a normal unittest.TestCase to a Django TestCase is

easy: just change the base class of your test from unittest.TestCase to

django.test.TestCase. All of the standard Python unit test

functionality will continue to be available, but it will be augmented with some

useful additions, including:

- Automatic loading of fixtures.

- Wraps each test in a transaction.

- Creates a TestClient instance.

- Django-specific assertions for testing for things like redirection and form errors.

TestCase inherits from TransactionTestCase.

-

class TransactionTestCase¶

Django TestCase classes make use of database transaction facilities, if

available, to speed up the process of resetting the database to a known state

at the beginning of each test. A consequence of this, however, is that the

effects of transaction commit and rollback cannot be tested by a Django

TestCase class. If your test requires testing of such transactional

behavior, you should use a Django TransactionTestCase.

TransactionTestCase and TestCase are identical except for the manner

in which the database is reset to a known state and the ability for test code

to test the effects of commit and rollback. A TransactionTestCase resets

the database before the test runs by truncating all tables and reloading

initial data. A TransactionTestCase may call commit and rollback and

observe the effects of these calls on the database.

A TestCase, on the other hand, does not truncate tables and reload initial

data at the beginning of a test. Instead, it encloses the test code in a

database transaction that is rolled back at the end of the test. It also

prevents the code under test from issuing any commit or rollback operations

on the database, to ensure that the rollback at the end of the test restores

the database to its initial state. In order to guarantee that all TestCase

code starts with a clean database, the Django test runner runs all TestCase

tests first, before any other tests (e.g. doctests) that may alter the

database without restoring it to its original state.
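The rollback strategy described above can be illustrated outside Django with the standard library's sqlite3 module. This is only a sketch of the idea, not Django's implementation; the table and the row are invented for the example, and the connection is opened in autocommit mode so that the transaction boundaries are explicit.

```python
import sqlite3

# isolation_level=None puts the connection in autocommit mode, so the
# BEGIN and ROLLBACK statements below control the transaction directly.
conn = sqlite3.connect(':memory:', isolation_level=None)
conn.execute('CREATE TABLE person (name TEXT)')


def count_people():
    return conn.execute('SELECT COUNT(*) FROM person').fetchone()[0]


# Before the test: open a transaction around everything the test does.
conn.execute('BEGIN')

# The test body writes to the database and sees its own changes.
conn.execute("INSERT INTO person (name) VALUES ('Aaron')")
rows_during_test = count_people()

# After the test: roll back instead of truncating and reloading tables.
conn.execute('ROLLBACK')
rows_after_test = count_people()
```

The table is empty again after the rollback, which is why a test wrapped this way cannot itself observe the effect of a real commit or rollback.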

When running on a database that does not support rollback (e.g. MySQL with the

MyISAM storage engine), TestCase falls back to initializing the database

by truncating tables and reloading initial data.

TransactionTestCase inherits from SimpleTestCase.

Note

The TestCase use of rollback to un-do the effects of the test code

may reveal previously-undetected errors in test code. For example,

test code that assumes primary key values will be assigned starting at

one may find that assumption no longer holds true when rollbacks instead

of table truncation are being used to reset the database. Similarly,

the reordering of tests so that all TestCase classes run first may

reveal unexpected dependencies on test case ordering. In such cases a

quick fix is to switch the TestCase to a TransactionTestCase.

A better long-term fix, that allows the test to take advantage of the

speed benefit of TestCase, is to fix the underlying test problem.

-

class SimpleTestCase¶

A very thin subclass of unittest.TestCase, it extends it with some

basic functionality like:

- Saving and restoring the Python warning machinery state.

- Checking that a callable raises a certain exception.

- Testing form field rendering.

- Testing server HTML responses for the presence/lack of a given fragment.

- The ability to run tests with modified settings.

If you need any of the other more complex and heavyweight Django-specific features like:

- Using the client Client.

- Testing or using the ORM.

- Database fixtures.

- Custom test-time URL maps.

- Test skipping based on database backend features.

- The remaining specialized assert* methods.

then you should use TransactionTestCase or

TestCase instead.

SimpleTestCase inherits from django.utils.unittest.TestCase.

Default test client¶

-

TestCase.client¶

Every test case in a django.test.TestCase instance has access to an

instance of a Django test client. This client can be accessed as

self.client. This client is recreated for each test, so you don’t have to

worry about state (such as cookies) carrying over from one test to another.

This means, instead of instantiating a Client in each test:

    from django.utils import unittest
    from django.test.client import Client

    class SimpleTest(unittest.TestCase):
        def test_details(self):
            client = Client()
            response = client.get('/customer/details/')
            self.assertEqual(response.status_code, 200)

        def test_index(self):
            client = Client()
            response = client.get('/customer/index/')
            self.assertEqual(response.status_code, 200)

...you can just refer to self.client, like so:

    from django.test import TestCase

    class SimpleTest(TestCase):
        def test_details(self):
            response = self.client.get('/customer/details/')
            self.assertEqual(response.status_code, 200)

        def test_index(self):
            response = self.client.get('/customer/index/')
            self.assertEqual(response.status_code, 200)

Customizing the test client¶

-

TestCase.client_class¶

If you want to use a different Client class (for example, a subclass

with customized behavior), use the client_class class

attribute:

    from django.test import TestCase
    from django.test.client import Client

    class MyTestClient(Client):
        # Specialized methods for your environment...
        pass

    class MyTest(TestCase):
        client_class = MyTestClient

        def test_my_stuff(self):
            # Here self.client is an instance of MyTestClient...
            pass

Fixture loading¶

-

TestCase.fixtures¶

A test case for a database-backed Web site isn’t much use if there isn’t any

data in the database. To make it easy to put test data into the database,

Django’s custom TestCase class provides a way of loading fixtures.

A fixture is a collection of data that Django knows how to import into a database. For example, if your site has user accounts, you might set up a fixture of fake user accounts in order to populate your database during tests.

The most straightforward way of creating a fixture is to use the

manage.py dumpdata command. This assumes you

already have some data in your database. See the dumpdata

documentation for more details.

Note

If you’ve ever run manage.py syncdb, you’ve

already used a fixture without even knowing it! When you call

syncdb in the database for the first time, Django

installs a fixture called initial_data. This gives you a way

of populating a new database with any initial data, such as a

default set of categories.

Fixtures with other names can always be installed manually using

the manage.py loaddata command.

Initial SQL data and testing

Django provides a second way to insert initial data into models – the custom SQL hook. However, this technique cannot be used to provide initial data for testing purposes. Django’s test framework flushes the contents of the test database after each test; as a result, any data added using the custom SQL hook will be lost.

Once you’ve created a fixture and placed it in a fixtures directory in one

of your INSTALLED_APPS, you can use it in your unit tests by

specifying a fixtures class attribute on your django.test.TestCase

subclass:

    from django.test import TestCase
    from myapp.models import Animal

    class AnimalTestCase(TestCase):
        fixtures = ['mammals.json', 'birds']

        def setUp(self):
            # Test definitions as before.
            call_setup_methods()

        def testFluffyAnimals(self):
            # A test that uses the fixtures.
            call_some_test_code()

Here’s specifically what will happen:

- At the start of each test case, before setUp() is run, Django will flush the database, returning the database to the state it was in directly after syncdb was called.

- Then, all the named fixtures are installed. In this example, Django will install any JSON fixture named mammals, followed by any fixture named birds. See the loaddata documentation for more details on defining and installing fixtures.

This flush/load procedure is repeated for each test in the test case, so you can be certain that the outcome of a test will not be affected by another test, or by the order of test execution.

URLconf configuration¶

-

TestCase.urls¶

If your application provides views, you may want to include tests that use the test client to exercise those views. However, an end user is free to deploy the views in your application at any URL of their choosing. This means that your tests can’t rely upon the fact that your views will be available at a particular URL.

In order to provide a reliable URL space for your test,

django.test.TestCase provides the ability to customize the URLconf

configuration for the duration of the execution of a test suite. If your

TestCase instance defines a urls attribute, the TestCase will use

the value of that attribute as the ROOT_URLCONF for the duration

of that test.

For example:

    from django.test import TestCase

    class TestMyViews(TestCase):
        urls = 'myapp.test_urls'

        def testIndexPageView(self):
            # Here you'd test your view using ``Client``.
            call_some_test_code()

This test case will use the contents of myapp.test_urls as the

URLconf for the duration of the test case.

Multi-database support¶

-

TestCase.multi_db¶

Django sets up a test database corresponding to every database that is

defined in the DATABASES definition in your settings

file. However, a big part of the time taken to run a Django TestCase

is consumed by the call to flush that ensures that you have a

clean database at the start of each test run. If you have multiple

databases, multiple flushes are required (one for each database),

which can be a time consuming activity – especially if your tests

don’t need to test multi-database activity.

As an optimization, Django only flushes the default database at

the start of each test run. If your setup contains multiple databases,

and you have a test that requires every database to be clean, you can

use the multi_db attribute on the test suite to request a full

flush.

For example:

    from django.test import TestCase

    class TestMyViews(TestCase):
        multi_db = True

        def testIndexPageView(self):
            call_some_test_code()

This test case will flush all the test databases before running

testIndexPageView.

Overriding settings¶

-

TestCase.settings()¶

For testing purposes it’s often useful to change a setting temporarily and

revert to the original value after running the testing code. For this use case

Django provides a standard Python context manager (see PEP 343)

settings(), which can be used like this:

    from django.test import TestCase

    class LoginTestCase(TestCase):
        def test_login(self):
            # First check for the default behavior
            response = self.client.get('/sekrit/')
            self.assertRedirects(response, '/accounts/login/?next=/sekrit/')

            # Then override the LOGIN_URL setting
            with self.settings(LOGIN_URL='/other/login/'):
                response = self.client.get('/sekrit/')
                self.assertRedirects(response, '/other/login/?next=/sekrit/')

This example will override the LOGIN_URL setting for the code

in the with block and reset its value to the previous state afterwards.

-

override_settings()¶

In case you want to override a setting for just one test method or even the

whole TestCase class, Django provides the

override_settings() decorator (see PEP 318). It’s

used like this:

    from django.test import TestCase
    from django.test.utils import override_settings

    class LoginTestCase(TestCase):
        @override_settings(LOGIN_URL='/other/login/')
        def test_login(self):
            response = self.client.get('/sekrit/')
            self.assertRedirects(response, '/other/login/?next=/sekrit/')

The decorator can also be applied to test case classes:

    from django.test import TestCase
    from django.test.utils import override_settings

    class LoginTestCase(TestCase):
        def test_login(self):
            response = self.client.get('/sekrit/')
            self.assertRedirects(response, '/other/login/?next=/sekrit/')

    LoginTestCase = override_settings(LOGIN_URL='/other/login/')(LoginTestCase)

Note

When given a class, the decorator modifies the class directly and

returns it; it doesn’t create and return a modified copy of it. So if

you try to tweak the above example to assign the return value to a

different name than LoginTestCase, you may be surprised to find that

the original LoginTestCase is still equally affected by the

decorator.
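The in-place behavior described in this note is a general property of class decorators that return the object they were given. The following sketch uses a hypothetical decorator, override_attr, and a plain class rather than Django's override_settings, purely to show the aliasing; it makes no claim about how override_settings is implemented.

```python
def override_attr(**overrides):
    """Hypothetical class decorator: sets attributes on the class it is
    given and returns that same class object (no copy is made)."""
    def decorate(cls):
        for name, value in overrides.items():
            setattr(cls, name, value)
        return cls
    return decorate


class LoginTestCase(object):
    login_url = '/accounts/login/'


# Binding the result to a new name does not leave the original untouched.
PatchedLoginTestCase = override_attr(login_url='/other/login/')(LoginTestCase)

same_object = PatchedLoginTestCase is LoginTestCase
original_url = LoginTestCase.login_url
```

Both names refer to one class, so the attribute change is visible through either of them.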

On Python 2.6 and higher you can also use the well known decorator syntax to decorate the class:

    from django.test import TestCase
    from django.test.utils import override_settings

    @override_settings(LOGIN_URL='/other/login/')
    class LoginTestCase(TestCase):
        def test_login(self):
            response = self.client.get('/sekrit/')
            self.assertRedirects(response, '/other/login/?next=/sekrit/')

Note

When overriding settings, make sure to handle the cases in which your app’s

code uses a cache or similar feature that retains state even if the

setting is changed. Django provides the

django.test.signals.setting_changed signal that lets you register

callbacks to clean up and otherwise reset state when settings are changed.

Note that this signal isn’t currently used by Django itself, so changing

built-in settings may not yield the results you expect.

Emptying the test outbox¶

If you use Django’s custom TestCase class, the test runner will clear the

contents of the test email outbox at the start of each test case.

For more detail on email services during tests, see Email services.

Assertions¶

As Python’s normal unittest.TestCase class implements assertion methods

such as assertTrue() and

assertEqual(), Django’s custom TestCase class

provides a number of custom assertion methods that are useful for testing Web

applications:

The failure messages given by most of these assertion methods can be customized

with the msg_prefix argument. This string will be prefixed to any failure

message generated by the assertion. This allows you to provide additional

details that may help you to identify the location and cause of a failure in

your test suite.

-

SimpleTestCase.assertRaisesMessage(expected_exception, expected_message, callable_obj=None, *args, **kwargs)¶

New in Django 1.4: Please see the release notes

Asserts that execution of callable callable_obj raised the expected_exception exception and that such exception has an expected_message representation. Any other outcome is reported as a failure. Similar to unittest’s assertRaisesRegexp() with the difference that expected_message isn’t a regular expression.
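For comparison, roughly the same check can be written with plain unittest by capturing the exception and looking for the message text literally. This is a sketch, not Django's implementation: parse_age is a hypothetical function under test, and treating the expected message as a substring of the exception text is an assumption made for the example.

```python
import unittest


def parse_age(value):
    # Hypothetical function under test.
    if value < 0:
        raise ValueError('Age must not be negative.')
    return value


class ParseAgeTest(unittest.TestCase):
    def test_negative_age(self):
        with self.assertRaises(ValueError) as caught:
            parse_age(-1)
        # Literal text comparison; no regular expression is involved.
        self.assertIn('must not be negative', str(caught.exception))


# Run the test case programmatically and collect the outcome.
suite = unittest.TestLoader().loadTestsFromTestCase(ParseAgeTest)
result = unittest.TestResult()
suite.run(result)
```

Because the message is compared as plain text, characters such as parentheses or dots in the expected message need no escaping.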

-

SimpleTestCase.assertFieldOutput(self, fieldclass, valid, invalid, field_args=None, field_kwargs=None, empty_value=u'')¶

New in Django 1.4: Please see the release notes

Asserts that a form field behaves correctly with various inputs.

Parameters:

- fieldclass – the class of the field to be tested.

- valid – a dictionary mapping valid inputs to their expected cleaned values.

- invalid – a dictionary mapping invalid inputs to one or more raised error messages.

- field_args – the args passed to instantiate the field.

- field_kwargs – the kwargs passed to instantiate the field.

- empty_value – the expected clean output for inputs in EMPTY_VALUES.

For example, the following code tests that an EmailField accepts “a@a.com” as a valid email address, but rejects “aaa” with a reasonable error message:

    self.assertFieldOutput(EmailField, {'a@a.com': 'a@a.com'}, {'aaa': [u'Enter a valid e-mail address.']})

-

TestCase.assertContains(response, text, count=None, status_code=200, msg_prefix='', html=False)¶ Asserts that a Response instance produced the given status_code and that text appears in the content of the response. If count is provided, text must occur exactly count times in the response.

New in Django 1.4: Please see the release notes

Set html to True to handle text as HTML. The comparison with the response content will be based on HTML semantics instead of character-by-character equality. Whitespace is ignored in most cases, and attribute ordering is not significant. See assertHTMLEqual() for more details.

-

TestCase.assertNotContains(response, text, status_code=200, msg_prefix='', html=False)¶ Asserts that a Response instance produced the given status_code and that text does not appear in the content of the response.

New in Django 1.4: Please see the release notes

Set html to True to handle text as HTML. The comparison with the response content will be based on HTML semantics instead of character-by-character equality. Whitespace is ignored in most cases, and attribute ordering is not significant. See assertHTMLEqual() for more details.

-

TestCase.assertFormError(response, form, field, errors, msg_prefix='')¶ Asserts that a field on a form raises the provided list of errors when rendered on the form.

form is the name the Form instance was given in the template context.

field is the name of the field on the form to check. If field has a value of None, non-field errors (errors you can access via form.non_field_errors()) will be checked.

errors is an error string, or a list of error strings, that are expected as a result of form validation.

-

TestCase.assertTemplateUsed(response, template_name, msg_prefix='')¶ Asserts that the template with the given name was used in rendering the response.

The name is a string such as 'admin/index.html'.

New in Django 1.4: Please see the release notes

You can use this as a context manager, like this:

    # This is necessary in Python 2.5 to enable the with statement.
    # In 2.6 and up, it's not necessary.
    from __future__ import with_statement

    with self.assertTemplateUsed('index.html'):
        render_to_string('index.html')

    with self.assertTemplateUsed(template_name='index.html'):
        render_to_string('index.html')

-

TestCase.assertTemplateNotUsed(response, template_name, msg_prefix='')¶ Asserts that the template with the given name was not used in rendering the response.

New in Django 1.4: Please see the release notes

You can use this as a context manager in the same way as assertTemplateUsed().

-

TestCase.assertRedirects(response, expected_url, status_code=302, target_status_code=200, msg_prefix='')¶ Asserts that the response returned a status_code redirect status, that it redirected to expected_url (including any GET data), and that the final page was received with target_status_code.

If your request used the follow argument, the expected_url and target_status_code will be the URL and status code for the final point of the redirect chain.

-

TestCase.assertQuerysetEqual(qs, values, transform=repr, ordered=True)¶

New in Django 1.3: Please see the release notes

Asserts that a queryset qs returns a particular list of values values.

The comparison of the contents of qs and values is performed using the function transform; by default, this means that the repr() of each value is compared. Any other callable can be used if repr() doesn’t provide a unique or helpful comparison.

By default, the comparison is also ordering dependent. If qs doesn’t provide an implicit ordering, you can set the ordered parameter to False, which turns the comparison into a Python set comparison.

Changed in Django 1.4: The ordered parameter is new in version 1.4. In earlier versions, you would need to ensure the queryset is ordered consistently, possibly via an explicit order_by() call on the queryset prior to comparison.
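The comparison rules for transform and ordered can be mimicked on an ordinary list. The helper below, items_match, is hypothetical and stands in for the assertion only to make the semantics concrete; it is a sketch of the behavior described above, not Django's code, and it uses a plain list of strings in place of a queryset.

```python
def items_match(items, values, transform=repr, ordered=True):
    """Hypothetical helper mirroring the comparison described above."""
    transformed = [transform(item) for item in items]
    if ordered:
        # Position matters: compare element by element.
        return transformed == list(values)
    # Order is ignored: compare as sets.
    return set(transformed) == set(values)


names = ['Aaron', 'Daniel']  # stand-in for the objects in a queryset

ordered_ok = items_match(names, ["'Aaron'", "'Daniel'"])
wrong_order = items_match(names, ["'Daniel'", "'Aaron'"])
unordered_ok = items_match(names, ["'Daniel'", "'Aaron'"], ordered=False)
custom_transform = items_match(names, ['AARON', 'DANIEL'], transform=str.upper)
```

Note that with the default transform the expected values are repr() strings, quotes included, which is why a custom callable is often more readable.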

-

TestCase.assertNumQueries(num, func, *args, **kwargs)¶

New in Django 1.3: Please see the release notes

Asserts that when func is called with *args and **kwargs that num database queries are executed.

If a "using" key is present in kwargs it is used as the database alias for which to check the number of queries. If you wish to call a function with a using parameter you can do it by wrapping the call with a lambda to add an extra parameter:

    self.assertNumQueries(7, lambda: my_function(using=7))

If you’re using Python 2.5 or greater you can also use this as a context manager:

    # This is necessary in Python 2.5 to enable the with statement, in 2.6
    # and up it is no longer necessary.
    from __future__ import with_statement

    with self.assertNumQueries(2):
        Person.objects.create(name="Aaron")
        Person.objects.create(name="Daniel")

-

SimpleTestCase.assertHTMLEqual(html1, html2, msg=None)¶

New in Django 1.4: Please see the release notes

Asserts that the strings html1 and html2 are equal. The comparison is based on HTML semantics. The comparison takes the following things into account:

- Whitespace before and after HTML tags is ignored.

- All types of whitespace are considered equivalent.

- All open tags are closed implicitly, e.g. when a surrounding tag is closed or the HTML document ends.

- Empty tags are equivalent to their self-closing version.

- The ordering of attributes of an HTML element is not significant.

- Attributes without an argument are equal to attributes that are equal in name and value (see the examples).

The following examples are valid tests and don’t raise any AssertionError:

    self.assertHTMLEqual('<p>Hello <b>world!</p>',
        '''<p> Hello <b>world! <b/> </p>''')
    self.assertHTMLEqual(
        '<input type="checkbox" checked="checked" id="id_accept_terms" />',
        '<input id="id_accept_terms" type="checkbox" checked>')

html1 and html2 must be valid HTML. An AssertionError will be raised if one of them cannot be parsed.

-

SimpleTestCase.assertHTMLNotEqual(html1, html2, msg=None)¶

New in Django 1.4: Please see the release notes

Asserts that the strings html1 and html2 are not equal. The comparison is based on HTML semantics. See assertHTMLEqual() for details.

html1 and html2 must be valid HTML. An AssertionError will be raised if one of them cannot be parsed.

Email services¶

If any of your Django views send email using Django’s email functionality, you probably don’t want to send email each time you run a test using that view. For this reason, Django’s test runner automatically redirects all Django-sent email to a dummy outbox. This lets you test every aspect of sending email – from the number of messages sent to the contents of each message – without actually sending the messages.

The test runner accomplishes this by transparently replacing the normal email backend with a testing backend. (Don’t worry – this has no effect on any other email senders outside of Django, such as your machine’s mail server, if you’re running one.)

-

django.core.mail.outbox¶

During test running, each outgoing email is saved in

django.core.mail.outbox. This is a simple list of all

EmailMessage instances that have been sent.

The outbox attribute is a special attribute that is created only when

the locmem email backend is used. It doesn’t normally exist as part of the

django.core.mail module and you can’t import it directly. The code

below shows how to access this attribute correctly.

Here’s an example test that examines django.core.mail.outbox for length

and contents:

    from django.core import mail
    from django.test import TestCase

    class EmailTest(TestCase):
        def test_send_email(self):
            # Send message.
            mail.send_mail('Subject here', 'Here is the message.',
                'from@example.com', ['to@example.com'],
                fail_silently=False)

            # Test that one message has been sent.
            self.assertEqual(len(mail.outbox), 1)

            # Verify that the subject of the first message is correct.
            self.assertEqual(mail.outbox[0].subject, 'Subject here')

As noted previously, the test outbox is emptied

at the start of every test in a Django TestCase. To empty the outbox

manually, assign the empty list to mail.outbox:

from django.core import mail

# Empty the test outbox

mail.outbox = []

Skipping tests¶

The unittest library provides the @skipIf and

@skipUnless decorators to allow you to skip tests

if you know ahead of time that those tests are going to fail under certain

conditions.

For example, if your test requires a particular optional library in order to

succeed, you could decorate the test case with @skipIf. Then, the test runner will report that the test wasn’t

executed and why, instead of failing the test or omitting the test altogether.
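A minimal sketch of the optional-library case using only the standard unittest module follows. The module name a_library_that_is_not_installed is a placeholder chosen so that the import fails; substitute the real optional dependency in practice.

```python
import unittest

try:
    import a_library_that_is_not_installed  # hypothetical optional dependency
except ImportError:
    a_library_that_is_not_installed = None


class OptionalLibraryTest(unittest.TestCase):
    @unittest.skipIf(a_library_that_is_not_installed is None,
                     'optional library is not installed')
    def test_uses_library(self):
        self.fail('never reached when the library is missing')

    def test_always_runs(self):
        self.assertEqual(1 + 1, 2)


# Run the test case programmatically and collect the skip reasons.
suite = unittest.TestLoader().loadTestsFromTestCase(OptionalLibraryTest)
result = unittest.TestResult()
suite.run(result)
skip_reasons = [reason for _test, reason in result.skipped]
```

The skipped test is recorded together with its reason instead of being counted as a failure or silently dropped.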

To supplement these test skipping behaviors, Django provides two additional skip decorators. Instead of testing a generic boolean, these decorators check the capabilities of the database, and skip the test if the database doesn’t support a specific named feature.

The decorators use a string identifier to describe database features.

This string corresponds to attributes of the database connection

features class. See BaseDatabaseFeatures

class for a full list of database features that can be used as a basis

for skipping tests.

-

skipIfDBFeature(feature_name_string)¶

Skip the decorated test if the named database feature is supported.

For example, the following test will not be executed if the database supports transactions (e.g., it would not run under PostgreSQL, but it would under MySQL with MyISAM tables):

    from django.test import TestCase, skipIfDBFeature

    class MyTests(TestCase):
        @skipIfDBFeature('supports_transactions')
        def test_transaction_behavior(self):
            # ... conditional test code
            pass

-

skipUnlessDBFeature(feature_name_string)¶

Skip the decorated test if the named database feature is not supported.

For example, the following test will only be executed if the database supports transactions (e.g., it would run under PostgreSQL, but not under MySQL with MyISAM tables):

from django.test import TestCase, skipUnlessDBFeature

class MyTests(TestCase):
    @skipUnlessDBFeature('supports_transactions')
    def test_transaction_behavior(self):
        pass  # ... conditional test code

Live test server¶

-

class

LiveServerTestCase¶

LiveServerTestCase does basically the same as

TransactionTestCase with one extra feature: it launches a

live Django server in the background on setup, and shuts it down on teardown.

This allows the use of automated test clients other than the

Django dummy client such as, for example, the Selenium

client, to execute a series of functional tests inside a browser and simulate a

real user’s actions.

By default the live server’s address is ‘localhost:8081’ and the full URL

can be accessed during the tests with self.live_server_url. If you’d like

to change the default address (in the case, for example, where the 8081 port is

already taken) then you may pass a different one to the test command

via the --liveserver option, for example:

./manage.py test --liveserver=localhost:8082

Another way of changing the default server address is by setting the DJANGO_LIVE_TEST_SERVER_ADDRESS environment variable somewhere in your code (for example, in a custom test runner):

import os

os.environ['DJANGO_LIVE_TEST_SERVER_ADDRESS'] = 'localhost:8082'

In the case where the tests are run by multiple processes in parallel (for example, in the context of several simultaneous continuous integration builds), the processes will compete for the same address, and therefore your tests might randomly fail with an “Address already in use” error. To avoid this problem, you can pass a comma-separated list of ports or ranges of ports (at least as many as the number of potential parallel processes). For example:

./manage.py test --liveserver=localhost:8082,8090-8100,9000-9200,7041

Then, during test execution, each new live test server will try every specified port until it finds one that is free and takes it.
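To make the port-list behavior concrete, here is a rough sketch of how such a specification could be expanded and probed. It is not Django's own implementation; expand_ports and first_free_port are hypothetical helpers, and the sketch assumes that ranges include both endpoints:

```python
import socket


def expand_ports(spec):
    """Expand '8082,8090-8100' into [8082, 8090, ..., 8100]."""
    ports = []
    for part in spec.split(','):
        if '-' in part:
            start, end = part.split('-')
            # Assumption: both ends of a range are included.
            ports.extend(range(int(start), int(end) + 1))
        else:
            ports.append(int(part))
    return ports


def first_free_port(host, ports):
    """Return the first port in `ports` that can currently be bound."""
    for port in ports:
        sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
        try:
            sock.bind((host, port))
        except socket.error:
            continue  # port is taken, try the next candidate
        else:
            return port
        finally:
            sock.close()
    raise RuntimeError('no free port in the given list')


host, _, port_spec = 'localhost:8082,8090-8100,9000-9200,7041'.partition(':')
candidates = expand_ports(port_spec)
```

Ports are tried in the order given, which is why the list should contain at least as many ports as there are parallel processes.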

To demonstrate how to use LiveServerTestCase, let’s write a simple Selenium

test. First of all, you need to install the selenium package into your

Python path:

pip install selenium

Then, add a LiveServerTestCase-based test to your app’s tests module

(for example: myapp/tests.py). The code for this test may look as follows:

from django.test import LiveServerTestCase
from selenium.webdriver.firefox.webdriver import WebDriver

class MySeleniumTests(LiveServerTestCase):
    fixtures = ['user-data.json']

    @classmethod
    def setUpClass(cls):
        cls.selenium = WebDriver()
        super(MySeleniumTests, cls).setUpClass()

    @classmethod
    def tearDownClass(cls):
        cls.selenium.quit()
        super(MySeleniumTests, cls).tearDownClass()

    def test_login(self):
        self.selenium.get('%s%s' % (self.live_server_url, '/login/'))
        username_input = self.selenium.find_element_by_name("username")
        username_input.send_keys('myuser')
        password_input = self.selenium.find_element_by_name("password")
        password_input.send_keys('secret')
        self.selenium.find_element_by_xpath('//input[@value="Log in"]').click()

Finally, you may run the test as follows:

./manage.py test myapp.MySeleniumTests.test_login

This example will automatically open Firefox then go to the login page, enter the credentials and press the “Log in” button. Selenium offers other drivers in case you do not have Firefox installed or wish to use another browser. The example above is just a tiny fraction of what the Selenium client can do; check out the full reference for more details.

Note

LiveServerTestCase makes use of the staticfiles contrib app so you’ll need to have your project configured

accordingly (in particular by setting STATIC_URL).

Note

When using an in-memory SQLite database to run the tests, the same database connection will be shared by two threads in parallel: the thread in which the live server is run and the thread in which the test case is run. It’s important to prevent simultaneous database queries via this shared connection by the two threads, as that may sometimes randomly cause the tests to fail. So you need to ensure that the two threads don’t access the database at the same time. In particular, this means that in some cases (for example, just after clicking a link or submitting a form), you might need to check that a response is received by Selenium and that the next page is loaded before proceeding with further test execution. Do this, for example, by making Selenium wait until the <body> HTML tag is found in the response (requires Selenium > 2.13):

def test_login(self):
    from selenium.webdriver.support.wait import WebDriverWait
    timeout = 10  # seconds to wait before giving up
    ...
    self.selenium.find_element_by_xpath('//input[@value="Log in"]').click()
    # Wait until the response is received
    WebDriverWait(self.selenium, timeout).until(
        lambda driver: driver.find_element_by_tag_name('body'))

The tricky thing here is that there’s really no such thing as a “page load,” especially in modern Web apps that generate HTML dynamically after the server generates the initial document. So, simply checking for the presence of <body> in the response might not necessarily be appropriate for all use cases. Please refer to the Selenium FAQ and Selenium documentation for more information.

Using different testing frameworks¶

Clearly, doctest and unittest are not the only Python testing

frameworks. While Django doesn’t provide explicit support for alternative

frameworks, it does provide a way to invoke tests constructed for an

alternative framework as if they were normal Django tests.

When you run ./manage.py test, Django looks at the TEST_RUNNER

setting to determine what to do. By default, TEST_RUNNER points to

'django.test.simple.DjangoTestSuiteRunner'. This class defines the default Django

testing behavior. This behavior involves:

- Performing global pre-test setup.

- Looking for unit tests and doctests in the models.py and tests.py files in each installed application.

- Creating the test databases.

- Running syncdb to install models and initial data into the test databases.

- Running the unit tests and doctests that are found.

- Destroying the test databases.

- Performing global post-test teardown.

If you define your own test runner class and point TEST_RUNNER at

that class, Django will execute your test runner whenever you run

./manage.py test. In this way, it is possible to use any test framework

that can be executed from Python code, or to modify the Django test execution

process to satisfy whatever testing requirements you may have.

Defining a test runner¶

A test runner is a class defining a run_tests() method. Django ships

with a DjangoTestSuiteRunner class that defines the default Django

testing behavior. This class defines the run_tests() entry point,

plus a selection of other methods that are used by run_tests() to

set up, execute and tear down the test suite.

-

class

DjangoTestSuiteRunner(verbosity=1, interactive=True, failfast=True, **kwargs)¶

verbosity determines the amount of notification and debug information that will be printed to the console; 0 is no output, 1 is normal output, and 2 is verbose output.

If interactive is True, the test suite has permission to ask the user for instructions when the test suite is executed. An example of this behavior would be asking for permission to delete an existing test database. If interactive is False, the test suite must be able to run without any manual intervention.

If failfast is True, the test suite will stop running after the first test failure is detected.

Django will, from time to time, extend the capabilities of the test runner by adding new arguments. The **kwargs declaration allows for this expansion. If you subclass DjangoTestSuiteRunner or write your own test runner, ensure it accepts and handles the **kwargs parameter.

New in Django 1.4: Please see the release notes

Your test runner may also define additional command-line options. If you add an option_list attribute to a subclassed test runner, those options will be added to the list of command-line options that the test command can use.

Attributes¶

-

DjangoTestSuiteRunner.option_list¶

New in Django 1.4: Please see the release notes

This is the tuple of optparse options which will be fed into the management command’s OptionParser for parsing arguments. See the documentation for Python’s optparse module for more details.
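For instance, an option_list tuple could be built with optparse's make_option as sketched here. The --slow flag, its include_slow destination and the SlowAwareRunner class are made up for illustration; in a real project the class would subclass DjangoTestSuiteRunner rather than object:

```python
from optparse import OptionParser, make_option


class SlowAwareRunner(object):  # stand-in for a DjangoTestSuiteRunner subclass
    # Hypothetical extra option exposed on the test command line.
    option_list = (
        make_option('--slow', action='store_true', dest='include_slow',
                    default=False, help='Also run tests marked as slow.'),
    )


# Parse a sample command line the way an OptionParser would.
parser = OptionParser(option_list=SlowAwareRunner.option_list)
options, args = parser.parse_args(['--slow', 'myapp'])
```

After parsing, options.include_slow holds the flag value and args holds the remaining test labels.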

Methods¶

-

DjangoTestSuiteRunner.run_tests(test_labels, extra_tests=None, **kwargs)¶

Run the test suite.

test_labels is a list of strings describing the tests to be run. A test label can take one of three forms:

- app.TestCase.test_method – Run a single test method in a test case.

- app.TestCase – Run all the test methods in a test case.

- app – Search for and run all tests in the named application.

If test_labels has a value of None, the test runner should search for tests in all the applications in INSTALLED_APPS.

extra_tests is a list of extra TestCase instances to add to the suite that is executed by the test runner. These extra tests are run in addition to those discovered in the modules listed in test_labels.

This method should return the number of tests that failed.
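If you write your own runner, you will need to interpret these labels yourself. One possible way to take a label apart is sketched below; split_label is a hypothetical helper, not part of Django:

```python
def split_label(label):
    """Split a test label into (app, test_case, test_method).

    Parts that are absent from the label are returned as None.
    """
    parts = label.split('.')
    if len(parts) > 3 or not all(parts):
        raise ValueError(
            'Expected app, app.TestCase or app.TestCase.test_method; '
            'got %r' % label)
    # Pad with None so that shorter labels still unpack into three names.
    app, test_case, test_method = (parts + [None, None])[:3]
    return app, test_case, test_method
```

A runner can then decide, based on which parts are None, whether to load a single method, a whole test case, or every test in the application.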

-

DjangoTestSuiteRunner.setup_test_environment(**kwargs)¶

Sets up the test environment ready for testing.

-

DjangoTestSuiteRunner.build_suite(test_labels, extra_tests=None, **kwargs)¶

Constructs a test suite that matches the test labels provided.

test_labels is a list of strings describing the tests to be run. A test label can take one of three forms:

- app.TestCase.test_method – Run a single test method in a test case.

- app.TestCase – Run all the test methods in a test case.

- app – Search for and run all tests in the named application.

If test_labels has a value of None, the test runner should search for tests in all the applications in INSTALLED_APPS.

extra_tests is a list of extra TestCase instances to add to the suite that is executed by the test runner. These extra tests are run in addition to those discovered in the modules listed in test_labels.

Returns a TestSuite instance ready to be run.

-

DjangoTestSuiteRunner.setup_databases(**kwargs)¶

Creates the test databases.

Returns a data structure that provides enough detail to undo the changes that have been made. This data will be provided to the teardown_databases() function at the conclusion of testing.

-

DjangoTestSuiteRunner.run_suite(suite, **kwargs)¶

Runs the test suite.

Returns the result produced by running the test suite.

-

DjangoTestSuiteRunner.teardown_databases(old_config, **kwargs)¶

Destroys the test databases, restoring pre-test conditions.
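To show how these methods might fit together, the sketch below wires them up in the order suggested by the default behavior described earlier. It is an assumption about the call sequence, not a copy of Django's code, and RecordingRunner is a hypothetical stand-in that records calls instead of touching any database:

```python
class RecordingRunner(object):
    """Stand-in runner that logs each step instead of doing real work."""

    def __init__(self):
        self.calls = []
        self.torn_down = None

    def setup_test_environment(self, **kwargs):
        self.calls.append('setup_test_environment')

    def build_suite(self, test_labels, extra_tests=None, **kwargs):
        self.calls.append('build_suite')
        return list(test_labels or [])

    def setup_databases(self, **kwargs):
        self.calls.append('setup_databases')
        # Enough detail to undo the setup later (made-up structure).
        return {'created': ['default']}

    def run_suite(self, suite, **kwargs):
        self.calls.append('run_suite')
        return {'failures': 0}

    def teardown_databases(self, old_config, **kwargs):
        self.calls.append('teardown_databases')
        self.torn_down = old_config

    def run_tests(self, test_labels, extra_tests=None, **kwargs):
        # Assumed order: environment, suite, databases, run, clean up.
        self.setup_test_environment()
        suite = self.build_suite(test_labels, extra_tests)
        old_config = self.setup_databases()
        result = self.run_suite(suite)
        self.teardown_databases(old_config)
        return result['failures']
```

The value returned by setup_databases() is handed unchanged to teardown_databases(), which is what lets the teardown step restore the pre-test state.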